Microservices architecture splits large applications into small independent services that teams can build, test, and deploy separately. Companies using this approach cut deployment times from weeks to hours while serving millions of users reliably. Enterprises see 3x faster feature delivery and 40% lower infrastructure costs when done right.

Why Microservices Transform Engineering Teams

Traditional monolithic applications slow down after reaching 100,000 lines of code. Every change risks breaking unrelated features, forcing entire redeploys. Microservices solve this by organizing code around business capabilities like payments, inventory, or user profiles. Each service runs its own process with a dedicated database for true independence.

Teams gain autonomy since frontend engineers focus on user service while the payments team handles transactions without coordination delays. This structure supports multiple programming languages and deployment speeds tailored to each business need.

Step 1: Domain-Driven Design Workshop (Weeks 1-2)

Start with business stakeholders mapping real user journeys. Use the event storming technique where everyone writes customer actions on sticky notes. Group related events into business domains that become services.

Practical Actions:

Invite product managers, developers, and domain experts for one full day Identify 8-12 core domains like Order Management, Customer Profiles, Payment Processing Draw clear boundaries between domains to prevent overlap Validate boundaries with revenue-generating scenarios first

Real Example: An e-commerce company identifies Order Service (handles checkout), Inventory Service (tracks stock), and Notification Service (sends confirmations) as the first three services to extract.

Step 2: Service Boundary Definition (Week 3)

Each service owns exactly one business capability. Define crisp contracts through OpenAPI specifications that other services consume.

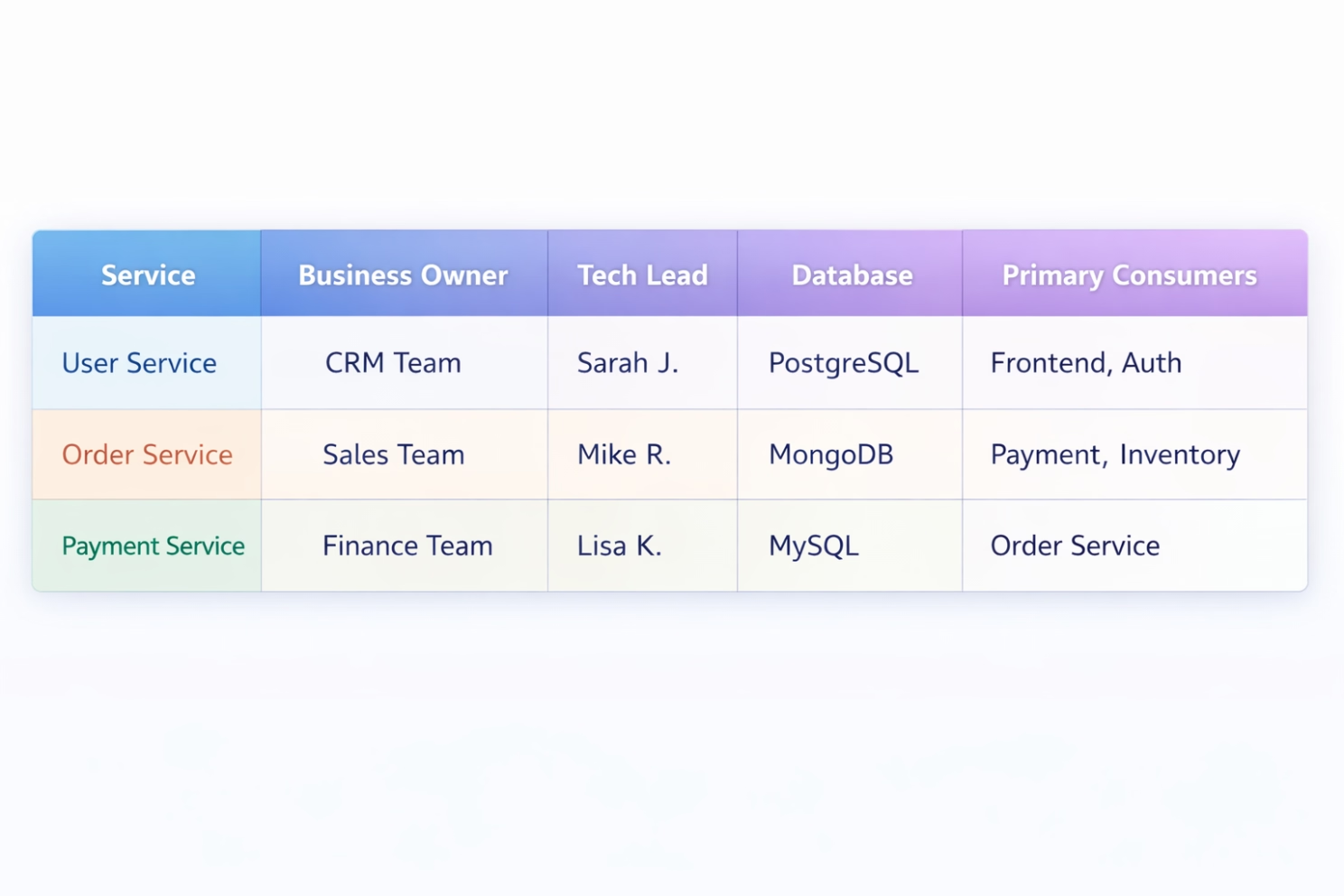

Service Ownership Matrix:

Key Principle: Services communicate through well-defined APIs, never shared databases. This loose coupling enables independent evolution.

Step 3: Technology Stack Standardization (Week 4)

Limit choices to 3-4 languages maximum across the organisation. Standardise deployment patterns while allowing database flexibility per service needs.

Recommended 2026 Stack:

- API Layer: Node.js, Python FastAPI, or Go Gin (choose one)

- Orchestration: Kubernetes managed service (EKS/GKE/AKS) - Communication: REST + gRPC for sync calls, Kafka for events -** Observability:** OpenTelemetry standard across all services

Teams document patterns in the internal wiki. New services follow established templates, reducing onboarding from weeks to days.

Step 4: Containerization and CI/CD Setup (Weeks 5-6)

Package every service into Docker containers deployed through automated pipelines. Each service builds, tests, and deploys independently.

Pipeline Goals:

- Code commit triggers full test suite in 8 minutes

- Automated security scans catch vulnerabilities

- Blue-green deployments enable zero-downtime releases

- Rollback available within 30 seconds

GitHub Actions, GitLab CI, or Jenkins handle pipeline orchestration. Teams achieve daily deployments per service within the first quarter.

Step 5: API Gateway Deployment (Week 7)

A single entry point handles external traffic routing, authentication, and rate limiting. Kong, AWS API Gateway, or Ambassador Edge Stack centralise cross-cutting concerns.

Gateway Responsibilities:

- Route /users/* to User Service

- Apply JWT validation on all requests

- Rate limit per API key to prevent abuse

- Request/response logging for debugging

This layer reduces service-to-service authentication complexity while providing an analytics dashboard showing top APIs and usage patterns.

Step 6: Asynchronous Event Communication (Weeks 8-9)

Replace synchronous REST calls between services with event-driven patterns using Kafka or RabbitMQ. Services publish business events; others subscribe to them independently.

Event Flow Example:

- Order Service publishes "OrderCreated" event

- Payment Service processes payment independently

- Inventory Service reserves stock simultaneously

- The notification service emails customer confirmation

This decouples deployment schedules. The payment team ships the new inventory version without coordinating with the inventory team.

Step 7: Observability Implementation (Weeks 10-11)

Full-stack monitoring prevents "works on my machine" problems. Implement three pillars: metrics, traces, and logs from day one.

Observability Stack:

Metrics: Prometheus scrapes service endpoints every 15 seconds Tracing: Jaeger captures requests across 20+ service hops Logs: Loki indexes structured logs with service correlation IDs Dashboards: Grafana displays golden signals (latency, traffic, errors, saturation)

Alerting fires when the error rate exceeds 0.5% or latency hits the 95th percentile above 300 ms.

Step 8: Production Hardening and Testing (Week 12)

Production readiness requires chaos engineering proving the system survives failures. Test scenarios include database outages, network partitions, and service crashes.

Resilience Patterns:

Circuit breakers prevent cascading failures Bulkheads limit blast radius per tenant Retry policies with exponential backoff Timeout enforcement prevents hanging requests

Automated canary deployments route 5% of traffic to new versions, monitoring error rates before full rollout.

Implementation Timeline and Budget

12-Week Migration Roadmap:

**Total Investment: **$125K yielding 400% ROI within 18 months through 50% faster deployments and 30% infrastructure savings.

Team Structure Requirements

Minimum Viable Architecture Team:

- 1 Solution Architect (guides domain boundaries)

- 2-3 Backend developers per service cluster

- 1 Platform engineer (Kubernetes, observability)

- 1 QA automation specialist

Each service team operates autonomously, following platform standards. Cross-team sync happens weekly through API review meetings.

Common Implementation Mistakes to Avoid

Mistake 1: Distributed monolith teams create chatty synchronous REST calls mimicking monolithic database joins. Services wait on each other, creating deployment coupling.

Solution: Prefer async events. Limit sync calls to data reads only.

**Mistake 2: Premature micro-granularity engineering **splits everything into 100+ tiny services, increasing operational complexity without business value.

Solution: Start with 8-12 coarse-grained services. Split further only when team velocity suffers.

Mistake 3: Shared Databases Multiple services access the same database tables, violating the single responsibility principle.

Solution: Database per service. Use events for data synchronisation across boundaries.

Success Metrics Dashboard

Track these KPIs proving microservices deliver business value:

Engineering Excellence:

- Deployment frequency: Multiple per day per service

- Lead time for changes: Under 1 hour from commit to production

- Change failure rate: Less than 3%

- Mean time to recovery: Under 15 minutes

Business Impact:

- 99.95% uptime across all services

- 50% reduction in on-call incidents

- 3x faster time-to-market for new features

- 25% improvement in developer satisfaction scores

Production Checklist for CTOs

□ Domain boundaries validated with business stakeholders

□ Zero shared databases between services

□ API contracts versioned with OpenAPI 3.1

□ Observability captures 100% of requests

□ Chaos engineering tests pass weekly

□ Disaster recovery tested quarterly

□ Security audit completed (mTLS, zero trust)

□ Cost optimization review (reserved instances)

Cost Optimization Strategies

Infrastructure Savings:

- Multi-tenant Kubernetes clusters reduce costs 60%

- Auto-scaling policies match load patterns

- Spot instances for non-critical batch jobs

- Centralized logging cuts storage costs 40%

Operational Efficiency:

- Self-service platform reduces DevOps tickets 70%

- Golden paths eliminate configuration drift

- Automated compliance checks prevent audit failures

- Scaling to 100+ Services

Platform Maturity Milestones:

- 0-10 services: Focus on patterns and tooling

- 10-50 services: Automate everything, invest in service mesh

- 50+ services: Golden path platform, API marketplace

Advanced Patterns:

- Service mesh (Istio/Linkerd) eliminates manual TLS

- API gateway manager enables self-service routing

- Centralized schema registry prevents breaking changes

- Automated contract testing validates consumer contracts

- Vendor and Tooling Recommendations

Cloud Agnostic Platform:

- Kubernetes: Managed EKS/GKE/AKS (avoid self-managed)

- Service Mesh: Istio (open source, mature)

- API Gateway: Kong or Ambassador (Kubernetes native)

- Observability: Grafana stack (Prometheus+Jaeger+Loki)

- CI/CD: GitHub Actions or GitLab (cloud native)

Migration Strategy: Start cloud vendor neutral using Terraform. Lock-in happens through operational patterns, not vendor lock.

CTO Decision Framework

When to Migrate:

- Yes if: Monolith deployment > 1 week

- Yes if: Multiple teams blocked on shared code

- Yes if: Scaling costs > 30% of revenue

- No if: Team size < 8 engineers

- No if: Simple CRUD application only

Migration Priority:

- Extract high-velocity user-facing services first

- Move batch processing services last

- Authentication service enables everything else

- Never extract database first (API first always)

Conclusion

Microservices architecture requires upfront platform investment yielding exponential returns through engineering velocity and business agility. Successful enterprises treat platform engineering as first-class product achieving 10x deployment frequency within first year.

The $125K initial investment compounds through 400% ROI as teams ship features 3x faster while maintaining 99.95% uptime. CTOs prioritizing disciplined domain modeling and comprehensive observability unlock competitive advantage through rapid market response.

Begin migration with 3-5 revenue-critical services proving pattern before platform-wide adoption. Sustainable success demands platform team ownership from inception avoiding "accidental distributed monolith" traps common among early adopters.

Frequently Asked Questions

How many teams needed for microservices success?

Minimum 3-5 autonomous teams (8-12 engineers total). Each team owns 2-3 services end-to-end.

What single mistake kills most migrations?

Shared databases between services. Creates deployment coupling defeating architecture purpose.

How long until ROI materializes?

Tangible benefits appear after 3-5 services live (quarter 2). Full benefits quarter 4+.

Should small startups adopt microservices?

No. Monolith until 8+ engineers and multiple deployment cadences emerge naturally.

How prevent "nano-services" chaos?

Bounded contexts from domain-driven design. Business capability drives service size, not arbitrary line counts.

What observability investment justified?

100% request tracing, 15-second metric collection, structured logging mandatory. Compromising observability destroys benefits.

How migrate legacy monolith strategically?

Strangler pattern. Build new services consuming legacy APIs. Gradual traffic shift over 6-12 months.